The Fast Turnaround (FT) observing mode lets users convert an idea into data much more quickly than the regular semester process. FT proposals are submitted and assessed monthly, and data for accepted proposals are obtained 1-4 months after the submission deadline.

FT proposals do NOT need to be urgent to qualify; they just need to be good. Consider using FT rather than the regular semester process for:

- short, self-contained projects that will result in publications,

- pilot/feasibility studies,

- following up unusual/unexpected astronomical events,

- speculative/high risk - high reward observations of fairly short duration,

- completion of a thesis project, when only a few short observations are needed, and

- completion of an existing data set to allow publication, when only a few short observations are needed.

However, users may propose for any kind of project that they believe is suited to the program.

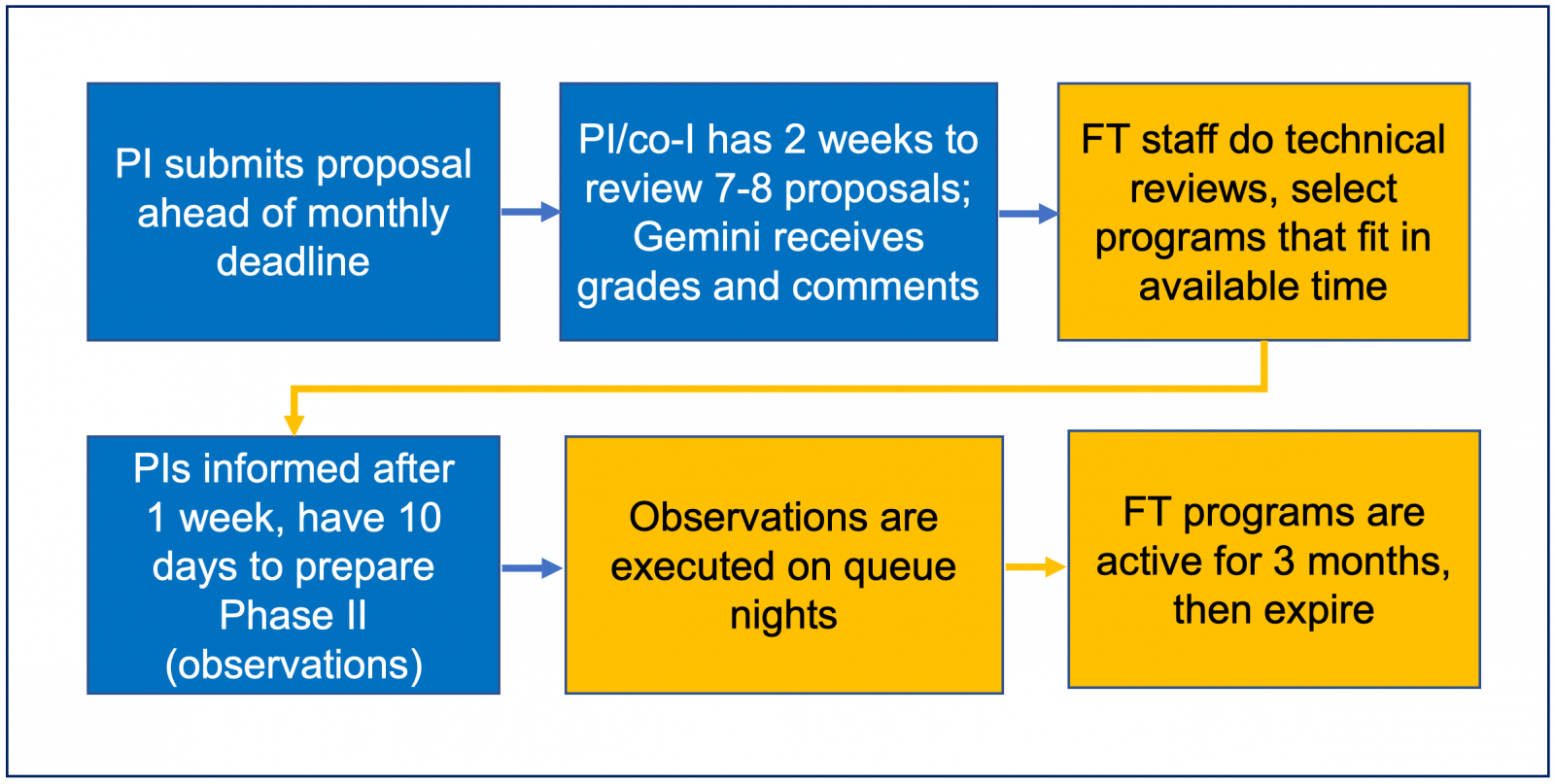

The FT program is run by a team of Gemini staff scientists and relies on the existing Phase I and Phase II tools, automated interactions between the proposers and Gemini, and rapid review of proposals by PIs or co-Is of other proposals submitted during the same round.

Approximately 10% of the time at both Gemini North and South is currently available for the program. PIs affiliated with the participating partners (rule #4) may submit FT proposals, but the total FT time per partner may not exceed that partner's 10% limit per semester (rule #4).

The total amount of time awarded each month to FT programs will not exceed 20 hours per telescope.

Submitted proposals are scientifically reviewed and graded by the PIs and/or co-Is of other proposals submitted during that round. Thus, submission of a proposal commits the PI or a designated co-I to review other proposals (typically 8) during that round. Any student on the proposal (PI or co-I) may be designated as the reviewer. In this case, a co-I (who has a PhD) must oversee the reviewing process. Failure to submit all reviews within two weeks will result in the PI’s own proposal being removed from consideration for that round. Reviewers should be aware that they will be requested to assess proposals in a wide range of scientific areas, and should make sure that their own proposals are accessible to people working in many different fields of astronomy.

- Current call for proposals

- Recent news

To receive monthly deadline reminders and news of changes to the program that may affect or interest potential users, send a message to "Gemini-FT-reminders+subscribe" at gemini dot edu.

Overview of the Fast Turnaround Program

A call for FT proposals is issued each month with a deadline of the last day of that month at 12:00pm (noon) Hawaii Standard Time. Proposers use the Gemini Phase I Tool for their applications. During the first half of the following month the proposal is reviewed and graded for scientific merit, as described below. Highly-ranked proposals are technically assessed by the FT Team. The PIs of successful proposals are notified by the 21st of that month and have 10 days to complete their Phase IIs (with assistance from the FT team as necessary).
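The monthly schedule above can be sketched in a few lines of Python. This is only an illustration: the function name `ft_cycle_dates` is hypothetical, the review deadline of the 14th is taken from the FAQ timeline further down, and the Phase II deadline is assumed to fall exactly 10 days after the 21st. The dates that actually apply are the ones published in each Call for Proposals.

```python
import calendar
from datetime import date, datetime, timedelta, timezone

# Hawaii Standard Time is UTC-10 and has no daylight saving time.
HST = timezone(timedelta(hours=-10), "HST")


def ft_cycle_dates(year: int, month: int) -> dict:
    """Approximate key dates for the FT call issued in (year, month).

    Hypothetical helper for illustration only.
    """
    last_day = calendar.monthrange(year, month)[1]
    proposal_deadline = datetime(year, month, last_day, 12, 0, tzinfo=HST)

    # Month following the deadline, rolling over the year if needed.
    next_year, next_month = (year + 1, 1) if month == 12 else (year, month + 1)
    notification = date(next_year, next_month, 21)

    return {
        "proposal_deadline": proposal_deadline,
        "reviews_due": date(next_year, next_month, 14),
        "notification_by": notification,
        # Assumption: the "10 days" are counted from the 21st.
        "phase2_due": notification + timedelta(days=10),
    }


dates = ft_cycle_dates(2024, 2)
print(dates["proposal_deadline"].isoformat())  # 2024-02-29T12:00:00-10:00
print(dates["phase2_due"])                     # 2024-03-31
```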

Each review entails providing a numerical grade between 4 (excellent) and 0 (proposal has serious flaws) and a brief written assessment, according to the proposal assessment criteria (see related section below). The goal is to have at least five reviewers for each proposal, whose identities are retained in a confidential database. The Fast Turnaround Team oversees the process and the final selection of accepted proposals, which consists of the highest-ranked programs that are technically feasible and fit in the available observing time. Time is only granted to proposals that achieve a mean grade of 2.0 or better. Because of the short timescales involved, proposals with technical problems will be rejected outright (but may be corrected and resubmitted during a subsequent cycle).
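As a rough illustration of the selection logic described above, the sketch below ranks proposals by mean grade, drops those below 2.0, and fills the available time in rank order. It is not the FT team's actual procedure: the `Proposal` class, the `select` function, and the simple greedy fill are assumptions made for this example. The clipped-mean tie-break follows rule 15, and technical feasibility and weather conditions are ignored.

```python
from dataclasses import dataclass
from statistics import mean


@dataclass
class Proposal:
    name: str
    grades: list[float]  # reviewer grades, 0 (serious flaws) to 4 (excellent)
    hours: float         # requested time


def clipped_mean(grades: list[float]) -> float:
    """Mean excluding the single top and bottom grades.

    Falls back to the plain mean when fewer than three grades are given.
    """
    trimmed = sorted(grades)[1:-1]
    return mean(trimmed) if trimmed else mean(grades)


def select(proposals: list[Proposal], available_hours: float) -> list[str]:
    """Rank by mean grade (clipped mean breaks ties) and fill the time."""
    eligible = [p for p in proposals if mean(p.grades) >= 2.0]
    ranked = sorted(
        eligible,
        key=lambda p: (mean(p.grades), clipped_mean(p.grades)),
        reverse=True,
    )
    accepted, remaining = [], available_hours
    for p in ranked:
        # Assumption: a proposal that does not fit is skipped, and
        # lower-ranked proposals may still use the remaining time.
        if p.hours <= remaining:
            accepted.append(p.name)
            remaining -= p.hours
    return accepted


proposals = [
    Proposal("A", [4, 3, 3, 3, 2], 8.0),  # mean 3.0, clipped mean 3.0
    Proposal("B", [4, 4, 4, 2, 1], 6.0),  # mean 3.0, clipped mean 3.33
    Proposal("C", [2, 2, 1, 2, 2], 3.0),  # mean 1.8, below the 2.0 cutoff
    Proposal("D", [3, 2, 2, 3, 2], 5.0),  # mean 2.4
]
print(select(proposals, available_hours=20.0))  # ['B', 'A', 'D']
```

Here A and B tie on the mean, so the clipped mean places B first; C is excluded by the 2.0 threshold even though time remains.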

Any submitted proposal must be executable in its entirety during the three months following the Phase II deadline, 1-4 months after the proposal deadline, or it will be rejected for that round, i.e., each target must be accessible for its specified observing time at some time during that 3-month period. In addition, it is required that the earliest targets be observable during the first month that the program is active (1-2 months after the proposal deadline).

FT programs that are accepted and whose Phase IIs are prepared in time become part of the regular queue. Accepted programs that are either not executed or only partly executed will be deactivated 4 months after the proposal deadline (3 months after the Phase II deadline).

There are some restrictions on observing modes and instrument configurations, as well as on targets of opportunity. Specific restrictions will be given in each call for proposals. "Complex" or "non-standard" observing modes will not be supported in the early stages of the program; examples of such modes are given on the Q&A page. Prospective users are encouraged to contact the FT team (Fast.Turnaround at gemini.edu) in advance if they are not sure whether their observing mode can be supported.

To encourage rapid publication, the proprietary period on Fast Turnaround data is limited to 6 months. Note that this is much shorter than the 12 months allowed for data collected in other observing modes. Data can be obtained from the archive in the same way as for any other programs.

The progress of the program will be regularly communicated to the public via observing reports and is regularly evaluated by the FT team and by the Gemini Board. Comments and suggestions by users or potential users of the program are welcome (send to Fast.Turnaround at gemini.edu).

Rules

1. All participants are expected to behave in an ethical manner. Although the reviewers assigned to each proposal will not be disclosed to PIs, their grades and written reviews will be kept in a database at Gemini and may be used as part of the internal assessment and review of the Fast Turnaround program. Starting from February 2021, a dual anonymous review process (DARP) was implemented. Identities of PIs will not be disclosed to reviewers.

2. All proposals must use the current Fast Turnaround DARP template. Proposals using older versions or regular Queue templates will be rejected. Furthermore, all proposals must comply with the DARP rules or be subject to Directorate review. Finally, all published deadlines in the Call for Proposals must be met by the proposer(s) or the proposal will be removed from consideration for the current round.

3. By submitting a proposal, the PI or the nominated co-I is committing to providing ratings and brief written assessments of as many as 8 other proposals submitted during the same round.

(a) Failure to provide all requested ratings and written assessments before the cutoff date and time will result in the PI’s proposal being automatically removed from further consideration.

(b) The PI may delegate the ranking and assessment of the other proposals to a single co-investigator on his/her proposal. That person must be named in the relevant field in the PIT. If no name is provided, the reviewer will default to being the PI. Changes to the reviewer may not be made after the proposal deadline.

(c) A different assessor must be designated for each submitted proposal in a given round. A PI is allowed to submit more than one proposal per round, but only if this rule is followed. If it is not followed the proposal will be rejected for that round.

(d) The title and abstract of each proposal will be provided to a number of potential reviewers, in order for conflicts of interest to be identified prior to review.

(e) The reviewers must identify and declare conflicts of interest. They must also agree to keep proposals confidential and use them only for the purpose of providing the assessments. Only the designated reviewer will have access to the proposals. With the exception of students and their mentors, reviewers must not discuss any aspect of the proposals with anyone else, including other known reviewers in the same cycle.

(f) Reviewers should try to use the full range of scores if possible. However, if none are particularly poor or excellent, the scores should at least span the range 1-3.

4. PIs of FT proposals must be affiliated with the following partner countries or institutions: USA (including Gemini staff), Canada, Argentina, Brazil, Korea, or Japan. Chile has withdrawn from Fast Turnaround starting in 2021A. UH has withdrawn from Fast Turnaround starting in 2024A. The US "open skies" policy does not apply to the Fast Turnaround program. Specific conditions apply; see the "Detailed eligibility" section below. The FT program is closed to applicants from a given partner once that partner has used up its Gemini FT share for the semester. Approximate FT time available per semester per telescope is:

- USA: 85 hrs (each telescope)

- Canada: 22 hrs (each telescope)

- Argentina: 2 hrs (each telescope)

- Brazil: 7 hrs (each telescope)

- Korea: 4 hrs (each telescope)

- University of Hawaii: Must use US time, and for Gemini South only

Note that if a partner uses up their full allocation at one telescope, up to 3 hrs may be transferred from remaining time at the other telescope if time for the fully subscribed telescope is requested in a successful proposal. This is meant to help the smaller partners make better use of their time. This means, for example, that a PI from AR could apply, in a single proposal, for all 4 h at either GN or GS if all 4 h still remain, or, if KR has used all available time at GS but has > 3 h remaining at GN, it would still be possible for a KR PI to apply for up to 3 h of time at GS.

5. A graduate student may be the PI of a proposal, but must nominate a PhD co-investigator to act as a “Mentor” for the reviews. More information on the Mentor role is provided here. The mentor’s conflicts of interest must be declared on the form received by the PI, and the mentor is bound by the same terms as the PI.

6. Repeat submissions are permitted, as are submissions of proposals previously rejected by other time allocation committees. Each submission implies agreement to review other proposals, regardless of whether the proposal was also submitted during a previous round.

7. Proposals for non-standard observing modes/instrument configurations may not be accepted. Prospective users should be aware of the list of unavailable observing modes given in the call for proposals, and contact the Fast Turnaround Team in advance if they are considering something unusual. Otherwise, the technical assessor will decide later whether that mode/configuration can be supported.

8. Proposals must not request more time than the amount available in the most restrictive weather condition bin. For example, with the 20 hours per month per telescope currently being made available, the 50-percentile WV bin for each telescope will contain 10 hours. A proposal for WV50 time with all other weather conditions less restrictive should then not request more than 10 hours in total. Likewise a CC50 IQ70 WV80 proposal with sky brightness unspecified also should not request more than 10 hours. A proposal requesting IQ20 should not request more than 4 hours.

9. One or more targets must be observable starting one month after the proposal deadline (the current CfP will give the acceptable RA range). If the requested instrument is not available at that time, the first target(s) must be observable on the first night that the requested instrument is scheduled for use.

10. In cases where more than one proposal requests similar observations of the same object, deference will be given to the already accepted program (whether FT, queue, classical, etc.) if it exists. Otherwise deference will be given to the proposal receiving the highest grade.

11. Both Standard and rapid targets-of-opportunity are now accepted through the FT program. Note, however, that highly time-constrained programs may not be accepted if they conflict with existing time-critical observations. Feel free to contact us in advance of proposing if your time-critical observations may fall into this category.

12. GMOS MOS programs are allowed. Because masks are currently cut at each site (no shipping needed), we now allow preimaging. However, the target must be available for preimaging early in the awarded cycle and well visible for the rest of the active cycle so the MOS observation can be completed. We nevertheless strongly recommend designing masks from catalogs, without preimaging, since we cannot guarantee that preimaging will be taken early in the cycle. We also strongly recommend designing masks as soon as possible, since checking and cutting the masks still carries an overhead of at least 1-2 weeks.

13. Because of the “rapid response” nature of the program, proposals with technical problems, or those for which technical feasibility cannot be established, will be rejected outright (but may be corrected/clarified and resubmitted at a future deadline).

14. When fewer than 7 proposals are received in any month, we will also request reviews from a standing panel of external reviewers. PIs (or their designated co-Is) will still review each other's proposals; we will simply include scores from the external assessors as well as those from people who have submitted proposals that month.

15. FT proposals will be ranked by their mean score and time will be allocated in this order, taking into consideration the available time and likely weather conditions. In the event of a tie between two or more proposals, the clipped mean (the mean excluding the single top and bottom grades) will be used to decide the ranking. Proposals must achieve a final (mean) grade of 2.0 or above in order to be awarded time. This implies that there may be occasions when the full amount of FT time available for a given month is not allocated even when more than the full amount of time was requested.

16. If a program is accepted, the observations must be ready (i.e., Phase II completed) by approximately 10 days after the notifications are sent (each Call for Proposals will show the specific dates).

17. Accepted programs will be active for four months from the deadline (i.e., they expire after 3 months in the FT queue).

18. The PI of each proposal will receive the written reviews, the proposal's final score, and information about target conflicts. A technical assessment will be provided for accepted proposals and for any proposal that was rejected on the basis of the technical review.

19. For accepted proposals, the proposal title, PI's name, time request, rough target coordinates (hrs:mins, deg), instrument and observing conditions will be made public.

20. Violation of rule 3e, or repeated failure to provide useful rankings and reviews, will result in penalties including being prohibited from submitting to the Fast Turnaround program. A PI should contact the Fast Turnaround Team (Fast.Turnaround at gemini.edu) if she or he believes that the ideas in her/his proposal have been co-opted or shared with others by a reviewer. Disputes will be arbitrated by the Gemini director.
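Rule 8 amounts to a simple calculation: the time available in a condition bin is the bin's percentile times the monthly FT allocation, and a request is capped by the most restrictive bin. The sketch below assumes the 20 hours per month per telescope quoted in that rule. The function name and the dictionary layout are illustrative and not part of any Gemini tool, and this reading of the rule is simply the one consistent with the three examples it gives.

```python
MONTHLY_FT_HOURS = 20.0  # per telescope, as quoted in rule 8


def max_request_hours(conditions: dict[str, int],
                      monthly_hours: float = MONTHLY_FT_HOURS) -> float:
    """Upper limit on requested time for a set of condition constraints.

    `conditions` maps a constraint name (e.g. "IQ", "CC", "WV", "SB") to its
    percentile bin; unspecified constraints are treated as unrestricted (100).
    """
    most_restrictive = min(conditions.values(), default=100)
    return monthly_hours * most_restrictive / 100


print(max_request_hours({"WV": 50}))                      # 10.0
print(max_request_hours({"CC": 50, "IQ": 70, "WV": 80}))  # 10.0
print(max_request_hours({"IQ": 20}))                      # 4.0
```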

Detailed eligibility rules

Each partner defines eligibility for their own community FT time. Eligibility to participate in FT is generally the same as Gemini partner access. One important exception is that US "open skies" policy does not apply, and US FT PIs must be affiliated with a US institution. Japanese community PIs, including Subaru staff, are eligible for Japan (JP) time north and south and should select this option. University of Hawaii PIs may no longer apply for time through FT at Gemini North. They remain eligible for US programs at Gemini South. Other Research Corporation of the University of Hawaii staff are eligible as US PIs, both north and south.

DARP (Dual Anonymous Review Process)

DARP was implemented to improve fairness in the review process and reduce the impact of unconscious bias.

This process was started in the Feb 2021 Fast Turnaround CfP. As of Feb 2022, all proposals must be submitted using the correct DARP templates and must follow all DARP rules. Non-compliant proposals will be removed from consideration. We ask Reviewers to help us make this review process a success. Please do NOT downgrade proposals for violating the rules of DARP. Instead, please note in your reviews any violations you find. The FT team will look these over and forward any actual violations to the Directorate for review. Also, please provide any feedback you have on this DARP process.

RULES for submitted proposals

Don’t:

- Mention names or affiliations of the team in the PDF attachment that could be used to identify the proposing team.

- Provide Program IDs for previous observations led by the team.

- Claim ownership of past work (e.g., “my successful Gemini program in the previous semester (GS-18A-xxx)”, or “our analysis shown in Doe et al. 2020…”).

- Make statements like “this is a student-led proposal” or “we are an international team”.

Do:

- Cite references in passive third person, e.g., “Analysis shown in Doe et al. 2020”, including references to data and software.

- Describe the proposed work, e.g., “We propose to do the following…”, or “We will measure the effects of …”

- Unpublished work can be referred to as “obtained in private communication” or “from private consultation”.

- You may mention that the proposal relates to thesis or other student research.

- You may mention that the proposal builds upon previous work/observations (just don’t provide references or program IDs).

- You may include text in proposals mentioning previous use of Gemini, as long as that work is not referenced and program IDs are not listed. However, do not make it easy for reviewers to track down your identity.

RULES for Reviewers

- Accept or decline each assigned proposal, based on its abstract, according to whether you can provide an unbiased review. As a reminder, not being familiar with a subject is not a valid reason for rejecting a proposal.

- Review proposals solely based on the scientific merit of what is proposed.

- Do not spend any time attempting to identify the PI or the team. Even if you think you know, you can be wrong.

- Utilize neutral pronouns (they/the PI/the team) when you write comments.

- Be aware that not every PI of a Gemini proposal is a native English speaker. Don't assume that occasional grammatical mistakes are due to carelessness on the part of the PI, and don't downgrade for that.

- Flag the proposals that have not been sufficiently anonymized but DO NOT penalize them by lowering grades. (The FT support team will check the flagged proposals and adjust grades only if necessary.)

See also this PDF presentation.

Conflict Criteria

If you are a designated Reviewer for a Fast Turnaround proposal, you will be given a list of proposals to review. You will at first see only the title and abstract for each proposal, and you will have to indicate whether or not you feel you can provide an unbiased review of each one. In cases where you do not believe you can provide a fair review, you must briefly explain the reason for your response (and, optionally, also explain why you can give a fair review).

Not being familiar with the subject area of a proposal is not considered a conflict of interest. With a fairly small pool of proposals and reviewers, almost everyone will be allocated proposals outside their area(s) of expertise. A good FT proposal will make an effort to persuade the reader that the proposed work is interesting and feasible.

Please click the Submit button after each entry. For each proposal you decline to review due to a valid conflict, you will receive a replacement. Once you have accepted all the proposals that are offered, click on the Start Review button to be given access to the proposal files and review form.

If you are a student reviewer with a PhD mentor, you must declare any conflict that applies to either you or your mentor on this page. Please work with your mentor to make sure that your replies are accurate.

Examples of acceptable conflicts:

- "This proposal overlaps in many key ways with research in which I am deeply involved."

- "For private/personal reasons, I do not feel that I can fairly review this proposal."

Not an acceptable conflict:

- "I am not familiar with this field of research."

Examples of a reviewer able to provide an unbiased review:

- "I have a previous publication on the same target/field but I no longer work on that subject."

- "I work in this field, but do not believe this conflicts with my work."

Proposal assessment criteria

Please rank the set of proposals assigned for review against each other, using the criteria provided below. Use as broad a range of grades between 4 (excellent) and 0 (flawed) as makes sense for the set; at a minimum, the scores should span the range 1-3. Written assessments of Fast Turnaround proposals can be brief, but should address the strengths and weaknesses of each proposal (please avoid simply restating the proposal or giving only a single sentence that does not address specifics).

The proposals should be assessed to the best of the reviewer's ability using the following criteria, in descending order of importance:

- The overall scientific merit of the proposed investigation and its potential contribution to the advancement of scientific knowledge.

  - Is the overall topic one of interest or of use to the astronomical community?

  - Does the proposal clearly explain how important, outstanding questions in the relevant field will be addressed by the proposed observations?

  - If the proposal is speculative, exploratory, or for a “pilot” study (all of which also are acceptable uses of the FT), is it well justified?

- The suitability of the experimental design to achieve the scientific goals.

  - For example, is the sample well chosen? Will the expected signal-to-noise ratio and spectral resolution permit the relevant quantities to be measured?

- The likelihood of the proposed research being brought to a successful conclusion.

- The benefit of rapid response to the proposed program.

  - Note that this is the least important assessment criterion. Programs that are urgent in some way (e.g., the target is setting) may be given a higher score. However, proposals that are not urgent should not be downgraded. In the review text there is no need to comment that a proposal is not urgent.

Technical feasibility of the proposed observations (e.g. whether the required S/N can be achieved in the stated exposure times, whether overheads have been correctly taken into account) will be determined by Gemini staff. In assigning grades to proposals, reviewers should not take feasibility into consideration. However, comments on technical aspects of the proposals may be made if the reviewer so wishes.

How to write a useful review

Individual proposal reviews are provided, anonymously and unedited, to PIs. Thoughtful assessments can help PIs write stronger proposals in future. Avoid simply restating the proposal or using a single generic sentence. Be objective, professional, and courteous. Avoid derogatory and judgemental language. Provide both strengths and weaknesses for each proposal. Reviews should include constructive feedback for improving each proposal. Be mindful of the fact that FT PIs come from a number of different partner countries and span a range of career stages. Because not every PI of a Gemini proposal is a native English speaker, don’t assume that occasional grammatical mistakes are due to carelessness on the part of the PI and don't downgrade for that (you may point out such issues if this will help the PI for future proposals). After completing your reviews, read through them once more before final submission and consider, from the perspective of the recipients, if you would find your reviews helpful.

Below, we provide some guidelines to assist reviewers in writing useful comments (based partly on advice from the Spitzer Science Center to their assessors).

Basic guidelines

- Be specific. If there are particular things in the proposal that you know could be improved, then please provide specifics. This can be particularly helpful for proposals from post-docs and graduate students who may not yet have as much experience writing proposals as their more senior colleagues.

- Avoid simply summarizing the proposal. A concise overview is fine, but it should not constitute the entire set of comments.

- Be brief. There's no need to think of things to say if there really is not anything wrong with the proposal.

- Be accurate. If you aren't sure about something then don't write it down (or, at least, raise it as a question rather than a fact).

- Do not use slang or jargon. A poorly defined sample is just that, not a ‘grab bag’.

- Do not pose unanswerable questions. Proposers don’t want to read, ‘What were they thinking? How will they get the answer?’ Instead say that you thought the path to the science was unclear or describe what specifically was missing.

- Be courteous and professional. It's not appropriate to be insulting or incredulous even if you think a proposal could be greatly improved.

Example comments

These comments, mostly taken from the first round of FT proposal reviews, demonstrate the type of comments that can be useful to PIs.

- This proposal explains well how the data will be used to study the accretion rate evolution.

- More details on confronting data with explosion models would be helpful in explaining how these observations would uncover the underlying physics.

- The scientific goal is clearly presented. However, this assumes all the unexpected features in [the target] are universal among all Type Ia SNe, which does not seem to be strongly supported by evidence.

- The experimental design is well justified, aiming to both capture important features and to probe their evolution between the three requested epochs.

- Although a lot of information about the dust, the SFR etc. could be extracted, the link to the scientific goal of understanding the physics of the evolution of the Hubble sequence is not clearly established.

- The outstanding questions they will answer are clearly outlined, the target selection is well justified and the team has the expertise to reduce and analyse the data promptly.

- The authors clearly justify the need to get H alpha maps for their candidates. They explain clearly why the small scale star formation is an interesting question and how they will derive the extinction values.

- The authors do not explain in detail in which way having broad band filter images helps constrain the theoretical models.

- The science case is interesting, but the proposal lacks a clear outline of what questions will be answered. For example, what new information will we learn from observing a third source? Will this allow us to decisively identify the nature of [this kind of object]?

- The proposal lists examples of several lines that may or may not be present in the spectrum, with no clear [diagnostic] that will unambiguously classify this source.

- The authors do not explain why their target is the 2nd best target out of ~70 known ULSs.

- The method that will be used to separate the two scenarios is well described, and the description of the experimental design seems suitable to apply this method.

- The assumptions on the Ly-a luminosity are too optimistic. The assumed value is among the highest values to date.

- However, given the expected low flux in the lines and continuum, the proposal relies on the contribution from the hidden AGN suggested by the strong MIPS 24micron detection. This could be risky as hidden AGNs are difficult to detect, especially since extinction may be quite high [in] post-starburst galaxies.

- It is unclear to me why the team had settled on targets with low impact parameters as a pilot study, if the case at large impact parameters could test predictions from simulations.

- The proposal appears to ignore the extensive literature on [...] that already addresses some of the proposed science goals. Complementary data for the proposed observations seem rather limited.

- Rather than make a comment such as "This proposal was written in haste, and, as such, did not clearly explain the path from observations to science", simply write that, "The proposal did not clearly explain the path from observations to science."

- Don't make statements such as "Generally it seems the proposal was rushed". Simply explain what was missing from the proposal.

Role of the Mentor for non-PhD reviewers

Students are strongly encouraged to take an active part in proposal writing and proposal reviewing, and to serve as proposal PIs. All of these are great ways to learn about and engage with one’s own science and the science that others are doing. As part of the FT process, researchers can hone their ability to write an effective proposal for a general scientific audience, both by writing their own proposal and by having the opportunity to review examples of both poorly written and well written proposals from other astronomers. However, for reviewers who do not (yet) hold a PhD, it is extremely helpful to have a mentor to guide the evaluation process and to provide context and specificity for the reviews written for others and the reviews received. Therefore, if a student (or other researcher) without a PhD is assigned as a reviewer, we require that a mentor be involved in the review process. Note that the designated mentor does not need to be the student's actual advisor; anyone on the proposal who holds a PhD can serve as the mentor.

As a mentor, you are expected to:

- Log in to the FT system via the credentials received in the email from FastTurnaround (by the 2nd of the month) and read and sign the Ethical Participation Agreement. After you have discussed this agreement with the student, the student must also log in separately (with their own credentials) and sign it.

- Work with the student to declare any conflicts of interest that pertain to either the student or mentor.

- Provide assistance to the student in reviewing the proposals, assigning grades, and writing reviews by discussing or providing examples of how to grade effectively and consistently (FT proposal assessment criteria can be found here). There is no TAC discussion as part of the FT review process, so you will be the primary resource for the student in terms of discussing the scientific content of the proposal. Note that only the student can enter scores and reviews.

- Review the student's final scores and reviews. Ensure that scores have a reasonable spread and reviews are sensible and will be useful to the recipients. If not, you are expected to discuss with the student how they might improve their scores/reviews.

- Work with the student after the results have been received to help interpret the reviews. Remember that reviewers are encouraged to list weaknesses even when they really like the proposal. If the proposal has not been awarded time, discuss, based on the reviews provided, how the proposal could be strengthened and resubmitted. Note that we highly encourage resubmissions of proposals that have addressed referee comments.

If the student has already been involved in the distributed review process on multiple occasions through FT or elsewhere, it is still expected that you will perform all of the duties listed above at some level in order to help students improve both their own proposals and the reviewing of those of others. For questions about any of this, please contact the Gemini FT staff at gem.fast-turnaround at noirlab.edu.

FAQs and Answers

On this page we attempt to answer the questions that have been raised as we have set up the Fast Turnaround program and discussed it with members of the Gemini community and their representatives. Please contact us at Fast.Turnaround at gemini.edu if you have questions that are not addressed here. In case of any conflicting information, the rules should be taken as definitive.

- What is the workflow and timeline?

- What if I submit after the deadline?

- What if I re-submit the same (but edited) proposal before the deadline?

- How does the review process work?

- How will my proposal be judged?

- Will my proposal really be rejected if I don't submit all my reviews on time?

- Who will see my proposal?

- How can you be sure the review process is fair?

- What determines which programs are accepted?

- How can graduate students be involved?

- Can I submit a proposal that requires really good conditions?

- How strict are the RA limits in the call for proposals?

- How much time can I ask for?

- What constitutes a "non-standard" instrument configuration or observing mode?

- What if someone proposes for observations that are already in the queue?

- How is it determined whether my accepted FT program is in Band 1 or Band 2? What about Band 3?

- How is FT different from Director's Discretionary Time?

- Can Target of Opportunity (ToO) observations be applied for?

- How much time is being used for the FT program?

- Should I acknowledge the FT program? If so, how?

- How can I give feedback?

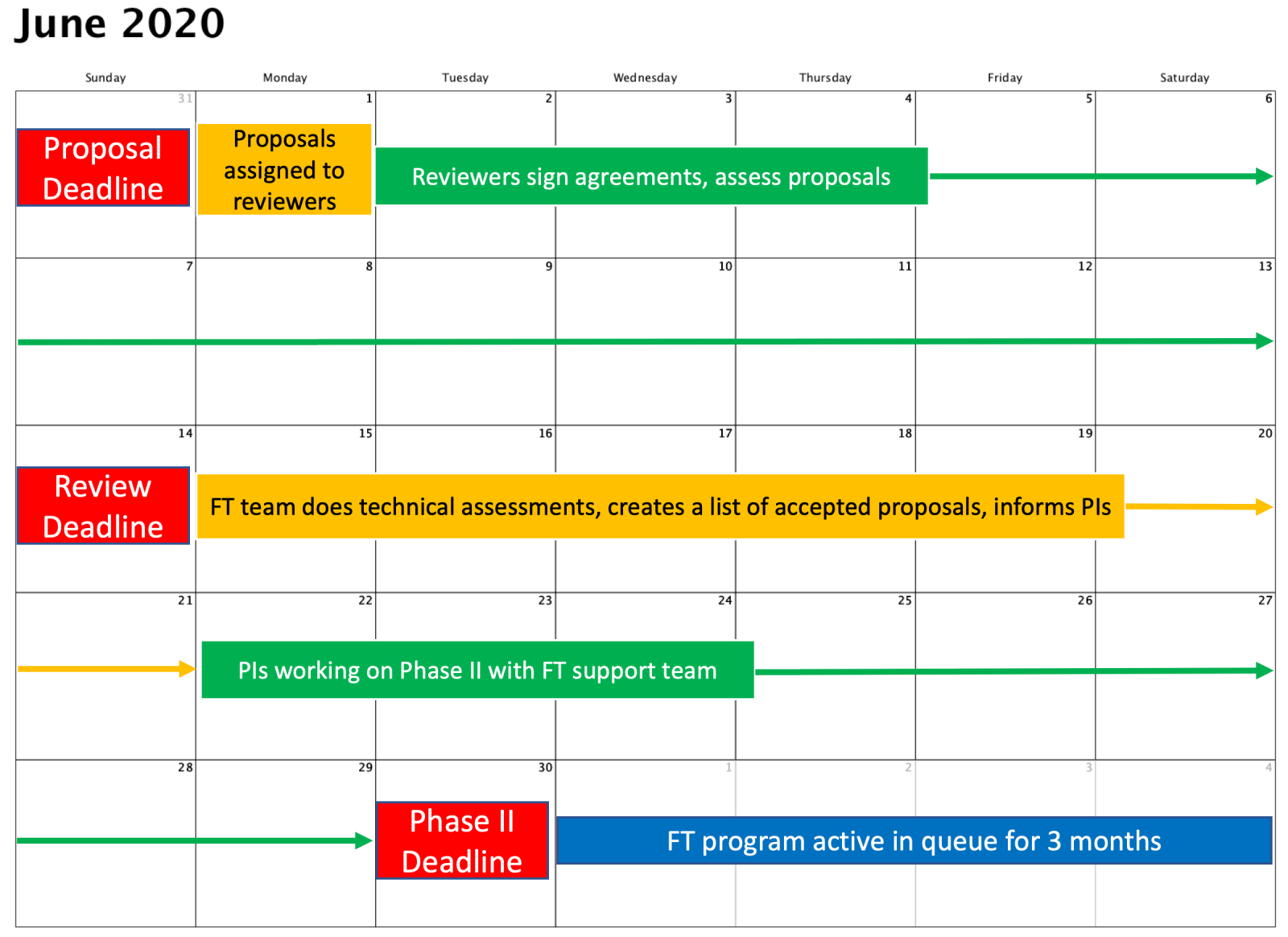

What is the overall workflow and timeline?

This graphic illustrates what will happen during one cycle in the FT program. Red boxes show fixed events (note that FT observations are no longer scheduled on fixed nights as shown in the figure), blue shows tasks done by the PI/co-I, and green shows work done by the FT support team at Gemini.

- The last day of each month (Month #0) at 11:59 pm Hawaiian Standard Time (HST): Proposal deadline.

- 1st of Month #1: Assignment of proposals for each PI or designated co-I to scientifically review.

- 14th of Month #1, 11:59 pm HST: Deadline for PI/co-I to submit all reviews.

- 21st of Month #1: Gemini FT team completes technical assessments of scientifically highly ranked proposals, constructs the final lists of accepted programs, and advises each PI of the status of his/her proposal.

- 10 days after the 21st of Month #1 (i.e. end of Month #1 or very early Month #2): Deadline for completion of Phase IIs.

- Months #2-4: Accepted proposals are "active" until the final night of Month #4, and are inactive thereafter.
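As a rough illustration of the timeline above, the following sketch computes the key calendar dates for one cycle from the month of the proposal deadline. The function name and dictionary keys are hypothetical and not part of any Gemini tool; the code works with calendar dates only and ignores the 11:59 pm HST cut-off times.

```python
import calendar
from datetime import date, timedelta


def ft_cycle_dates(year: int, month: int) -> dict:
    """Key dates for the FT cycle whose proposal deadline falls in (year, month).

    (year, month) identifies Month #0. Names and structure are illustrative only.
    """
    # Proposal deadline: last day of Month #0.
    deadline = date(year, month, calendar.monthrange(year, month)[1])
    # Reviews are assigned on the 1st of Month #1.
    month1_start = deadline + timedelta(days=1)
    reviews_due = month1_start.replace(day=14)
    results_sent = month1_start.replace(day=21)
    # Phase II deadline: 10 days after the 21st of Month #1.
    phase2_due = results_sent + timedelta(days=10)
    # Month #4 is three calendar months after Month #1.
    index = month1_start.year * 12 + (month1_start.month - 1) + 3
    m4_year, m4_month = divmod(index, 12)
    m4_month += 1
    active_until = date(m4_year, m4_month, calendar.monthrange(m4_year, m4_month)[1])
    return {
        "proposal_deadline": deadline,
        "reviews_assigned": month1_start,
        "reviews_due": reviews_due,
        "results_sent": results_sent,
        "phase2_due": phase2_due,
        "active_until": active_until,
    }


if __name__ == "__main__":
    for name, day in ft_cycle_dates(2021, 1).items():
        print(f"{name}: {day.isoformat()}")
```

For a deadline at the end of January, for example, the Phase II deadline falls in early March because February is short, which is the "very early Month #2" case mentioned above.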

What if I submit after the deadline?

Immediately after each proposal deadline, the proposal handling software takes the submissions and begins the process of assigning reviewers. Proposals that are received after this process has started will take part in the next proposal cycle, at the end of the next month. If you submit just after a deadline and do not wish to take part in the next cycle, please contact us (fast.turnaround at gemini.edu) so we can delete your proposal. Proposals cannot be inserted into the active cycle after the deadline.

What if I re-submit the same (but edited) proposal before the deadline?

The FT team will manually remove older versions of the same proposal before beginning the review process. Repeated submissions can cause delays and problems, however, and we cannot guarantee that the right version will be used. We urge PIs to carefully review their proposals before submission so that re-submission is not needed.

How does the review process work?

- Immediately after each deadline, the proposal handling software assigns proposals to the reviewers named in the proposals (specified in the Phase 1 Tool). A keyword matching algorithm is used to attempt to assign proposals to reviewers working on related subjects, as far as possible. Ideally everyone will review eight proposals, but that number will be lower if fewer than nine proposals are received.

- Within a day or two after the proposal deadline, each reviewer is emailed the link to a page on which he or she must accept or decline the conditions of the FT program: confidentiality and using the proposals only for the purposes of the review. (If the reviewer does not receive that email by two days after the deadline, he or she should contact the FT team as soon as possible.) Once they have accepted the terms, reviewers are each taken to a page showing the titles, abstracts, and investigators of the proposals they have been assigned. They must then declare whether or not there is any reason why they cannot provide an unbiased review of each proposal. See this blog post for screenshots of this and other web pages/forms.

- If any conflicts are declared, the reviewer is assigned replacement proposals. If not, the reviewer is sent the links to the proposals themselves, and to a review form. The review form asks for a grade and brief written assessment, along with the reviewer's assessment of her or his knowledge of the field of the proposal. The reviewer's self-assessment is not currently used to weight the scores, but may be used to assess how such a weighting would have changed the final grades and whether it should in fact be implemented.

- Immediately after the review deadline (currently the 14th of the month), the software looks for the review forms that have been submitted, takes the mean of the scores for each proposal, and creates ranked lists for each telescope that are then available to the FT support team. A simple mean grade is used; we have investigated the effect of using a clipped mean and found that it would not have changed the outcome of any of the proposal cycles so far.
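The final ranking step can be sketched as follows. This is a minimal illustration, not the actual proposal handling software: the function name and the input format are hypothetical, and it assumes that a higher numerical grade is better, which is not stated in this section.

```python
from collections import defaultdict
from statistics import mean


def rank_proposals(reviews):
    """Rank proposals per telescope by the simple mean of their review scores.

    `reviews` is an iterable of (proposal_id, telescope, score) tuples.
    Assumption: a higher score means a better proposal.
    """
    scores = defaultdict(list)
    telescope_of = {}
    for proposal_id, telescope, score in reviews:
        scores[proposal_id].append(score)
        telescope_of[proposal_id] = telescope

    ranked = defaultdict(list)
    for proposal_id, values in scores.items():
        # Simple (unclipped) mean, as described in the text.
        ranked[telescope_of[proposal_id]].append((proposal_id, mean(values)))

    for telescope in ranked:
        ranked[telescope].sort(key=lambda item: item[1], reverse=True)
    return dict(ranked)


if __name__ == "__main__":
    sample = [
        ("A", "North", 4), ("A", "North", 3),
        ("B", "North", 2), ("B", "North", 4),
        ("C", "South", 1),
    ]
    print(rank_proposals(sample))
```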

How will my proposal be judged?

Reviewers should assess proposals according to these assessment criteria, giving them an absolute grade as described on the program overview page (see the screenshot of the proposal review form linked in the previous question). Please note that, although we attempt to assign proposals to reviewers working in related fields using the keywords selected by PIs in their proposals, it is very likely that at least some reviewers will have little knowledge of the subject of your proposal. Proposals that are written for a broad audience have a greater chance of being successful.

Will my proposal really be rejected if I don't submit all my reviews on time?

Yes.

Who will see my proposal?

Your proposal will be read by 5-8 reviewers (PIs or co-Is of other proposals submitted for the same deadline, plus members of a standing, on-call panel when few proposals are received, see Rule 13). If any of the reviewers is a PhD student, the proposal will also be accessible to a PhD "mentor", as described below. All of these people will be required to agree to the terms of the FT program. The FT support team at Gemini will have access to the proposals, to enable technical reviews to be performed and the final queue to be constructed. Finally, during the initial trial period, accepted proposals were read by 1-2 members or former members of the Gemini National TACs. This was so that they could judge how the quality of the proposals compared to those awarded time through the regular, semester-based process, which formed part of the assessment of the FT pilot program.

How can you be sure that the review process is fair?

Not surprisingly, this was the subject of much debate as we designed the FT program. We read the Merrifield & Saari (2009) paper on distributed peer review, followed the discussion of that paper on the Facebook Astronomers group, and dipped briefly into psychological studies of lying and cheating. We had much discussion with Gemini's users and advisory committees, tested the system on volunteers from the Canadian community (see these SPIE proceedings), and the program was reviewed by a panel of internal and external experts. In the end, we decided not to build in numerical safeguards (hard to calibrate until we have some experience, may penalize inexperienced reviewers), and to start with a relatively simple system. During the initial trial period we monitored the review process closely and saw no obvious signs of bias or undesirable behavior. We continue to monitor the program. The FT program's peer review system will probably not appeal to everyone, but it should be seen as just one of a number of ways of gaining access to telescope time.

What determines which proposals are accepted?

The FT team selects the programs with the highest rankings from the peer-review process that are technically feasible and that will fit in the available time. We attempt to roughly fill the likely weather conditions and avoid accepting more observations than can be executed at a given RA. For example, if the two highest-ranked programs both require all of the 20 percentile image quality that is likely to be available (statistically speaking), then only one will be accepted. This does require some subjective decisions on the part of the FT team, but so far we have not had to reject highly-ranked programs for these reasons.

How can graduate students be involved?

We encourage graduate students to be involved in the FT program, and students may submit proposals as PI. When an FT proposal is submitted through the PIT, the PI or a co-I must be nominated as the person who will review the other proposals. If the reviewer is a graduate student, then a "mentor" must also be selected. The mentor will receive the same conflict of interest form as the student, and will also receive access to the proposals. The mentor is expected to provide whatever guidance is necessary for the student during the review process.

Can I submit a proposal that requests really good conditions?

You can, subject to the restriction that you don't request more time than is likely to be available in your most restrictive observing condition bin (see the Rules and the current Call for Proposals). However, given that the FT program can only allocate up to 20 hours per month, and does take the likelihood of various conditions occurring into account when allocating time, you will need to decide whether the risk of those conditions not occurring is worth the time you will put into writing the proposal, reviewing others, and setting up the observations.

How strict are the RA limits in the call for proposals?

We view the RA range as a guideline rather than as strict limits. If your observations are short and could be executed in the first or last few FT nights of the cycle, then targets a little outside the RA boundaries will be OK. On the other hand, if you submit many observations right at the edges and requiring very good conditions, that would count against your proposal as we attempt to select the highest-ranked programs that are actually feasible. We are deliberately not setting strict RA limits while we gain experience constructing a feasible FT queue each month; we’d rather leave it to users to decide what they think is worthwhile proposing for. We will adapt the program according to users’ feedback as we go along, though, so please contact us if you wish to provide feedback on this process.

How much time can I ask for?

The only restriction is that you don't request more time than is likely to be available in your (single) most restrictive observing condition bin (see the Rules and the current Call for Proposals). For example, if your proposal requires 85%-ile cloud cover but all other conditions (IQ, water vapor, sky background) are unrestricted, you could in principle request 85% of the time advertised in the CfP.
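The rule above amounts to scaling the advertised time by the percentile of the single most restrictive condition. A minimal sketch, in which the function name, the condition keys, and the 20-hour figure used in the example are illustrative assumptions (check the current Call for Proposals for the actual advertised time):

```python
def max_requestable_hours(advertised_hours, condition_percentiles):
    """Upper limit on a time request, set by the most restrictive condition bin.

    `condition_percentiles` maps each observing condition to the percentile
    requested, with 100 meaning unrestricted ("any").
    """
    most_restrictive = min(condition_percentiles.values())
    return advertised_hours * most_restrictive / 100


if __name__ == "__main__":
    # 85%-ile cloud cover, everything else unrestricted.
    conditions = {"CC": 85, "IQ": 100, "WV": 100, "SB": 100}
    print(max_requestable_hours(20, conditions))
```

Only the single tightest constraint is used; the percentiles are not multiplied together.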

What constitutes a "non-standard" instrument configuration or observing mode?

This would include techniques like using the acquisition cameras to observe an occultation, or drift-scanning over a globular cluster with GMOS. While the Fast Turnaround team was set up partly to include a wide range of experience and expertise, we won't be able to support every conceivable observation on the short timescales involved. Rather than attempt to provide an exhaustive list of what can and cannot be proposed for through the FT program, we encourage users to contact us (fast.turnaround at gemini.edu) if they are considering anything out of the ordinary.

What if someone proposes for observations that are already in the queue?

As stated in the Rules, accepted programs have priority over new FT proposals. The Phase I Tool will warn if targets have already been observed by Gemini. This information will be in the PDF files that are available to reviewers, so proposers should give a clear justification of why the "duplicate" observations are necessary, or how they differ from those in the archive. Planned observations are trickier. We cannot make the target lists of accepted queue programs public, so the FT team will check for potential duplications in FT proposals before they are accepted. The titles and PIs of accepted Gemini programs are available here. If you are thinking of writing an FT proposal but suspect there may already be a program doing very similar observations, feel free to get in touch (fast.turnaround at gemini.edu).

How is it determined whether my accepted FT program is in Band 1 or Band 2? What about Band 3?

In the OT, you will see that your FT program has been assigned a band (1 or 2). In order that the queue coordinators have some way of distinguishing the relative priorities of FT programs, we have appropriated the usual ranking band system. FT programs are not assigned Band 3 (once FT is selected in the Phase I Tool, the Band 3 option disappears from the Time Request options). The top-ranked ~1/2 of each month's programs will be assigned Band 1, the next ~1/2 Band 2. We aim to accept FT programs that stand a good chance of being completed, and we will attempt to complete all of them. However, in case of poor weather etc., using the ranking band system will allow us to prioritize programs according to how they were rated by their peers. The usual queue program completion rate goals by science band are not relevant to the FT program.
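The band split can be illustrated with a short sketch. The text only says "roughly half", so the rounding used here (the extra program goes to Band 1 when the number of accepted programs is odd) is an assumption, as is the function name.

```python
import math


def assign_bands(ranked_program_ids):
    """Assign Band 1 to the top-ranked half of accepted programs, Band 2 to the rest.

    `ranked_program_ids` must be ordered from highest to lowest ranked.
    Assumption: with an odd number of programs, the middle one goes to Band 1.
    """
    n_band1 = math.ceil(len(ranked_program_ids) / 2)
    return {
        program_id: (1 if position < n_band1 else 2)
        for position, program_id in enumerate(ranked_program_ids)
    }


if __name__ == "__main__":
    print(assign_bands(["A", "B", "C"]))
```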

How does Fast Turnaround differ from Director's Discretionary Time?

- Currently, DD time is specifically aimed at urgent, high-impact, and risky proposals;

- DDT proposals are scientifically and technically assessed by the Gemini Director of Science or her/his designees;

- Accepted DDT programs become part of the normal queue observing process;

- Accepted DDT programs usually have Band 1 priority and are almost certain to be observed (or attempted);

- The timeline for a DDT proposal to be assessed and (if accepted) its Phase II to be prepared can be significantly shorter than the FT timeline.

Can Target of Opportunity (ToO) observations be applied for?

Both standard and rapid ToO observations can now be applied for through FT proposals.

How much time is being used for the FT program?

Each partner except Chile contributes up to 10% of its available time at Gemini North and South. Using the science time given in the 2021B time distribution (for example), this translates to approximately 260 hours in 2021B, or about 4.5 nights/month (before weather loss). Please contact your National Gemini Office to check how much time is left for FT.

Should my publications that include FT data acknowledge the FT program, and if so, how?

Not specifically. However, the publication should include the program ID, which includes an FT identifier, as well as one of the standard Gemini acknowledgements. If you would like to refer to the FT program in a publication, please use these SPIE proceedings.

How can I give feedback?

We would really like to hear your opinions about what does and doesn't work. Feedback forms will be sent to all applicants, but (potential) users should also feel free to contact the FT team directly (fast.turnaround at gemini.edu).